Table - Add Table - Add tables using a crawler Now, using an AWS Glue Crawler, perform the following steps to create a table within the database to store the raw JSON log data. Within the Data Catalogue, create a database These are detailed here:Īn S3 bucket where the transform script and Parquet table are stored = s3:///Ī temporary location for AWS Glue config = s3:///tempįrom the AWS Console, advance to the AWS Glue console.

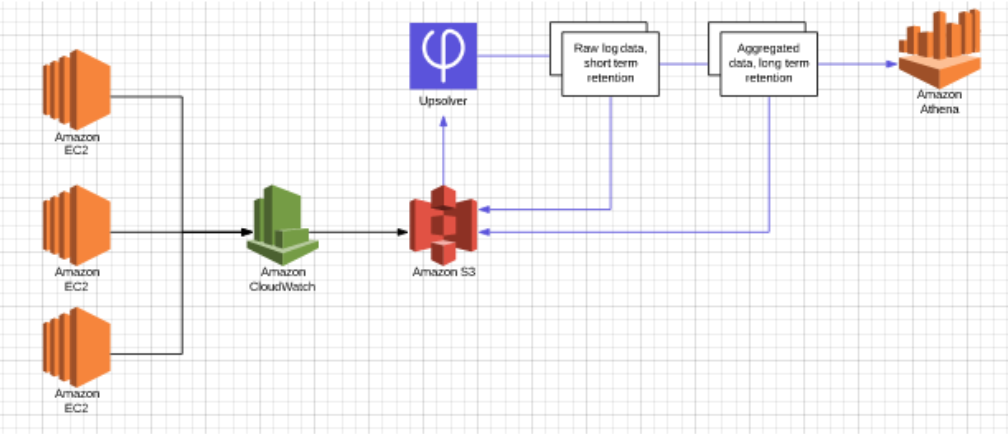

The Parquet formatted table is now ready to be queried via AWS AthenaĪs part of the process, 2 S3 buckets were required. Using a second AWS Crawler, create a Parquet formatted table Using an AWS Crawler, generate a table to store the raw JSON dataĭefine an ETL script, this will be used to re-structure the raw data Pre-requisitesĪ summary of the upcoming steps is listed below: Once catalogued, your data is immediately searchable, can be queried, and available for ETL.įor more information specifically on AWS Glue, click here. You simply point AWS Glue to your data stored on AWS, and AWS Glue discovers your data and stores the associated metadata (table definition and schema) in the AWS Glue Data Catalogue. You can create and run an ETL job with a few clicks in the AWS Management Console. What is AWS Glue?ĪWS Glue is a fully managed extract, transform, and load (ETL) service that makes it easy for customers to prepare and load their data for analytics. Below is a step by step guide on the process. In the end, AWS Glue was chosen as a valid way to tackle the problem. The raw logs contained JSON fields, which would necessitate overly complicated queries to generate useful output. This would cause problems in my case, as I was expecting certain reports to generate over 65,000 rows. However, upon further investigation, I quickly saw some drawbacks to this option:ĪWS Insights has an output row limit of 10,000. These logs were already being streamed to an AWS S3 bucket, and so I initially thought of simply interrogating the logs via AWS Insights. The log streams don't change during a failover and the error log stream of each node can contain error logs from primary or secondary instance.Recently I was asked to provide a quick, efficient and streamlined way of querying AWS CloudWatch Logs via the AWS Console. 
Is stored in the error log streams /aws/rds/instance/ my_instance.node1/error and /aws/rds/instance/ my_instance.node2/error For example, if you publish the error logs, the error data For example, if you publish error logs, error data is stored in an error logįor Multi-AZ DB instances, Amazon RDS publishes the database log as two separate streams in the log group. For more information, see Working with Amazon CloudWatch Logs in the Amazon Managed Service for Apache Flink forĪmazon RDS publishes each SQL Server database log as a separate database stream in the log Process log data in real time with Amazon Kinesis Data Streams. Stream data to Amazon OpenSearch Service. Store logs in highly durable storage space with a retention period that you With CloudWatch Logs, you can do the following: Analyze the log data with CloudWatch Logs, then use CloudWatch to create alarms and view With Amazon RDS for SQL Server, you can publish error and agent log events directly toĪmazon CloudWatch Logs. For more information, see Viewing error and agent logs. You can use the Amazon RDS stored procedure rds_read_error_log to view error Log by using the rds_read_error_log procedure To modify the dump file retention period for your DB Instance, see Setting the retention period for trace and dump files.ĭump files are retained according to the dump file retention To modify the trace file retention period for your DB Trace files are retained according to the trace file retention Amazon RDS might delete agent logs older than 7 days. Amazon RDS might delete error logs older than 7 days.Ī maximum of 10 agent logs are retained.
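The AWS Glue walkthrough in this post creates a database in the Data Catalogue and then runs a crawler over the raw JSON logs through the console. As a rough sketch only, the same step could be scripted in Python. The helper `build_crawler_request`, the crawler name, role ARN, database name and bucket path below are all hypothetical placeholders and do not come from the original setup; the commented-out boto3 calls assume boto3 is installed and AWS credentials are configured.

```python
def build_crawler_request(name, role_arn, database, s3_path):
    """Build keyword arguments for a Glue crawler that scans one S3 path.

    All values passed in are illustrative placeholders, not real resources.
    """
    if not s3_path.startswith("s3://"):
        raise ValueError("s3_path must start with s3://")
    return {
        "Name": name,                # crawler name (hypothetical)
        "Role": role_arn,            # IAM role the crawler assumes (hypothetical)
        "DatabaseName": database,    # Data Catalogue database that receives the table
        "Targets": {"S3Targets": [{"Path": s3_path}]},  # raw JSON log location
    }


request = build_crawler_request(
    name="raw-json-logs-crawler",
    role_arn="arn:aws:iam::123456789012:role/ExampleGlueRole",
    database="cloudwatch_logs_db",
    s3_path="s3://example-log-bucket/raw/",
)

# To create and start the crawler for real (needs boto3 and AWS credentials):
#   import boto3
#   glue = boto3.client("glue")
#   glue.create_database(DatabaseInput={"Name": request["DatabaseName"]})
#   glue.create_crawler(**request)
#   glue.start_crawler(Name=request["Name"])
```

Keeping the request as a plain dictionary means it can be inspected or reviewed before any AWS call is made; the console route described in the post achieves the same result without code.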
